The good

Last week I felt pretty good. I got the Electron app up and running with the Electron Dead Link Checker v0.0.1. This week is a stark contrast: I feel like very little good came out of the code.

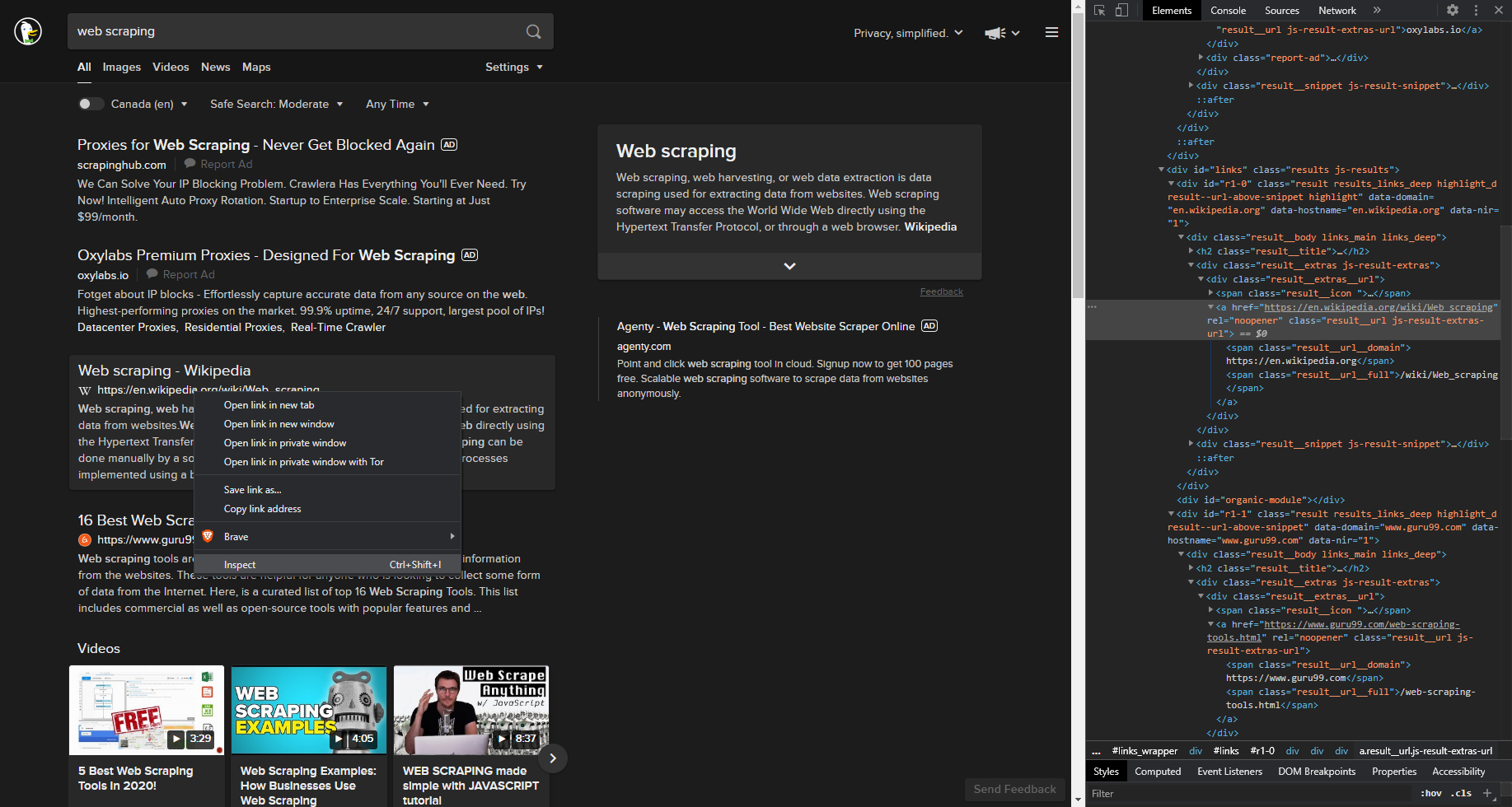


GitHub

The coolest part is that I found, and am starting to use, parts of GitHub that I have never used before. It has a Trello-like board that you can easily use to track tasks. I really like having it baked right into the repo where I am doing all my work.

From an issue, you can assign a project. The board then watches that issue and updates its position on the board based on what happens to it. Say you resolve an issue (which, I discovered, can be done from a commit just by putting fixes #<issuenumber> in the commit message). That issue will automatically move from wherever it was to Done. You can manage the automation for each column, as the picture above shows, and decide which events trigger which moves. Pretty cool.
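To make the commit-message trick concrete, here is a rough approximation of which messages would close an issue. It is an illustration only, not GitHub's actual parser: `issuesClosedBy` is a hypothetical name, it covers only GitHub's documented keywords (close/closes/closed, fix/fixes/fixed, resolve/resolves/resolved) followed by `#<number>`, and it ignores cross-repository references like `owner/repo#12`.

```typescript
// Approximation of GitHub's closing keywords (NOT GitHub's real implementation):
// close/closes/closed, fix/fixes/fixed, resolve/resolves/resolved, then "#<number>".
const CLOSING_KEYWORD = /\b(?:close[sd]?|fix(?:e[sd])?|resolve[sd]?)\s+#(\d+)/gi;

// Return the issue numbers a commit message would close, in order of appearance.
function issuesClosedBy(commitMessage: string): number[] {
  const issues: number[] = [];
  CLOSING_KEYWORD.lastIndex = 0; // global regexes keep state between calls; reset it
  let match: RegExpExecArray | null;
  while ((match = CLOSING_KEYWORD.exec(commitMessage)) !== null) {
    issues.push(Number(match[1]));
  }
  return issues;
}
```

For example, `issuesClosedBy("Show elapsed time, fixes #3")` yields `[3]`, while a plain reference like `Refs #4` closes nothing.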

Display progress

Displaying elapsed time was one of my goals for v0.0.2, and while I didn’t finish everything I wanted for v0.0.2, I did finish this. An elapsed time serves both as a notice to the user that work is happening and as a cool way to track how long the task takes to complete.

Originally I was returning the elapsed time from the dead-link-checker module, but then I realized I could just start a timer within my Electron app and stop it when the request completed.
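Starting a timer in the app and stopping it when the request completes could look roughly like this. It is a sketch under assumptions: `ElapsedTimer` and `timed` are hypothetical names (not from the app), the timer is assumed to live in the Angular component, and the 10 ms tick with a divide-by-100 display mirrors the approach the post uses.

```typescript
// Hypothetical component-side timer; names are illustrative, not from the app.
class ElapsedTimer {
  elapsedTime = 0; // counts 10 ms ticks, so elapsedTime / 100 is seconds
  private handle: ReturnType<typeof setInterval> | undefined;

  start(): void {
    this.stop(); // never run two intervals at once
    this.elapsedTime = 0;
    this.handle = setInterval(() => {
      this.elapsedTime += 1;
    }, 10);
  }

  stop(): void {
    if (this.handle !== undefined) {
      clearInterval(this.handle);
      this.handle = undefined;
    }
  }

  // Same math as the template: ticks divided by 100.
  get seconds(): number {
    return this.elapsedTime / 100;
  }
}

// Start the timer, run the check, and stop the timer however the check ends.
async function timed<T>(timer: ElapsedTimer, check: () => Promise<T>): Promise<T> {
  timer.start();
  try {
    return await check();
  } finally {
    timer.stop();
  }
}
```

Counting ticks can drift when the event loop is busy, because `setInterval` callbacks may fire late; if accuracy matters, storing `Date.now()` at start and subtracting it on each tick is a common alternative.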

Pretty simple. I think it looked a bit better to increment the counter every 10 ms and then do the simple math in my HTML of dividing by 100:

<h4 *ngIf='elapsedTime'>Elapsed time - {{elapsedTime / 100}} seconds.</h4>

Don’t display 999 for timeouts

This is good and bad. Previously I was displaying 999 whenever a request failed without returning a status code. Generally the error would be something like

RequestError {name: 'RequestError', message: 'Error: read ECONNRESET', cause: Error: read ECONNRESET

which didn’t have a status code. I had been treating these like timeouts and assigning them a 999 status code. For this… I cheated. I just checked whether I had a 999 and replaced it with an actual timeout status code, 408. It’s kind of misleading. I’ll talk more about this kind of thing in the bad section.
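One way to avoid the misleading 408 is to keep "the server answered with a status" separate from "the connection failed". The sketch below assumes an error shape loosely modeled on request-promise, where a `StatusCodeError` carries `statusCode` and a `RequestError` wraps the underlying Node error in `cause`; `CheckError`, `LinkResult`, and `classify` are hypothetical names, and the timeout codes listed are an assumption to verify against your version of the library.

```typescript
// Hypothetical shape of a failed link check (field names are assumptions,
// loosely modeled on request-promise's StatusCodeError / RequestError).
interface CheckError {
  name: string;
  statusCode?: number; // present when the server actually answered
  cause?: { code?: string }; // underlying Node error, e.g. ECONNRESET
}

type LinkResult =
  | { kind: "http"; status: number } // a real HTTP status from the server
  | { kind: "timeout"; code: string } // the request timed out
  | { kind: "network"; code: string }; // connection failed some other way

// Assumed timeout codes; check these against the HTTP library you use.
const TIMEOUT_CODES = new Set(["ETIMEDOUT", "ESOCKETTIMEDOUT"]);

function classify(err: CheckError): LinkResult {
  if (typeof err.statusCode === "number") {
    return { kind: "http", status: err.statusCode };
  }
  const code = err.cause?.code ?? "UNKNOWN";
  if (TIMEOUT_CODES.has(code)) {
    return { kind: "timeout", code };
  }
  // ECONNRESET and friends land here instead of being reported as a fake 408.
  return { kind: "network", code };
}
```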

Electron app is handling long tasks like a champ


I always worry with any kind of script that the longer it runs, the more likely something is to break. I had already proven that the script alone could handle long tasks, with one run lasting somewhere in the 30-something hours.

I’ve improved the script to skip links like #comment, so it checks a lot fewer links, but it’s still hitting upwards of 10k links over 10-15 minutes. I’m really pleased with this.
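Skipping links like #comment can be sketched as a small filter. This assumes #comment is a same-page fragment link; since the fragment is never sent to the server, links that differ only by fragment can also be checked once. `linksToCheck` and `tryParse` are hypothetical names, and skipping non-HTTP schemes such as `mailto:` is an extra assumption, not something the post describes.

```typescript
// Resolve href against the page URL; null if it is not a valid URL.
function tryParse(href: string, base: string): URL | null {
  try {
    return new URL(href, base);
  } catch {
    return null;
  }
}

// Hypothetical filter: drop same-page anchors, non-HTTP links, and duplicates
// that differ only by their #fragment.
function linksToCheck(pageUrl: string, hrefs: string[]): string[] {
  const seen = new Set<string>();
  const result: string[] = [];
  for (const href of hrefs) {
    if (href.startsWith("#")) continue; // e.g. "#comment": same page, nothing new to fetch
    const url = tryParse(href, pageUrl);
    if (url === null) continue;
    if (url.protocol !== "http:" && url.protocol !== "https:") continue; // mailto:, javascript:, ...
    url.hash = ""; // the fragment never reaches the server
    const key = url.toString();
    if (!seen.has(key)) {
      seen.add(key);
      result.push(key);
    }
  }
  return result;
}
```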

The bad

Cancel button

Most of my focus was on this. I’m trying to do it with web workers because this is an area I would like to get better at. I feel like I spun my wheels here. I barely made any progress before I was stopped by an error that I still haven’t figured out.

If I call deadLinkChecker directly from my app, no problem. If I call it from a web worker, death.

I went through every piece of deadLinkChecker, commenting out parts until I could isolate the problem. It seems there is more than one problem, but requestPromise is definitely one of them. It would be pretty tough to use this script without requestPromise. This is something I’m going to keep working on; there must be some way to do this kind of thing. Most of the people who have this problem hit it in an Angular context, but for me the script works great when it’s called directly from the Angular side. It only crashes like this in the web worker.
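A web worker is one way to get a cancel button. A simpler alternative the post doesn’t try is cooperative cancellation with the standard `AbortController`: the cancel button calls `abort()`, and the checking loop looks at `signal.aborted` before each link. `checkAll` and `checkOne` are hypothetical names, not deadLinkChecker’s actual API.

```typescript
// Hypothetical cooperative-cancel loop; checkOne stands in for whatever checks
// a single link and returns its status (it is not deadLinkChecker's real API).
async function checkAll(
  urls: string[],
  checkOne: (url: string) => Promise<number>,
  signal: AbortSignal,
): Promise<Map<string, number>> {
  const results = new Map<string, number>();
  for (const url of urls) {
    // Cancel pressed: stop before starting the next request.
    // A request already in flight is allowed to finish.
    if (signal.aborted) break;
    results.set(url, await checkOne(url));
  }
  return results; // partial results when cancelled
}
```

In the component, the cancel button’s click handler would call `controller.abort()` on the `AbortController` whose `signal` was passed in. If the check does end up running in a web worker, `worker.terminate()` stops the worker outright instead.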

Not very reliable yet

This should probably be the highest priority. Having a cancel button is important, but a user could always just close the app and reopen it. Right now links that are actually fine come back as bad, such as with my faked-out 408. An occasional false positive is probably okay; I think a low threshold like 10% may be acceptable, but I’m way over that. That ruins confidence in the product and kind of makes it useless.


I’m not sure why I sometimes get a 503 from Amazon or a 403 from other random websites, in addition to the weird timeouts. It’s possible I’m getting blocked, but I really think I can work that out in every situation except reCAPTCHAs, and even a page with a reCAPTCHA should return a 200 status code.
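One common mitigation for flaky results (not something the post tries) is to retry transient failures with a growing delay before reporting a link as bad. This is a sketch under assumptions: `statusWithRetry` and `fetchStatus` are hypothetical names, and which statuses count as retryable is a judgment call. Retrying will not help if a site is deliberately blocking automated requests, which may be what the 403s are.

```typescript
// Statuses assumed to be transient and worth retrying.
const RETRYABLE = new Set([408, 429, 500, 502, 503, 504]);

function sleep(ms: number): Promise<void> {
  return new Promise((resolve) => setTimeout(resolve, ms));
}

// Hypothetical wrapper: fetchStatus performs one request and returns the HTTP
// status, or throws on a network error such as ECONNRESET.
async function statusWithRetry(
  url: string,
  fetchStatus: (url: string) => Promise<number>,
  attempts = 3,
  delayMs = 1000,
): Promise<number> {
  for (let attempt = 1; ; attempt++) {
    try {
      const status = await fetchStatus(url);
      // Non-transient status, or out of attempts: report what we got.
      if (!RETRYABLE.has(status) || attempt >= attempts) return status;
    } catch (err) {
      if (attempt >= attempts) throw err; // still failing after the last attempt
    }
    await sleep(delayMs * 2 ** (attempt - 1)); // back off: 1 s, 2 s, 4 s, ...
  }
}
```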

So…that is what I will continue to work on.